Chapter 8. Learning

8.2 Changing Behaviour through Reinforcement and Punishment: Operant Conditioning

Learning Objectives

- Outline the principles of operant conditioning.

- Explain how learning can be shaped through the use of reinforcement schedules and secondary reinforcers.

In classical conditioning the organism learns to associate new stimuli with natural biological responses such as salivation or fear. The organism does not learn something new but rather begins to perform an existing behaviour in the presence of a new signal. Operant conditioning, on the other hand, is learning that occurs based on the consequences of behaviour and can involve the learning of new actions. Operant conditioning occurs when a dog rolls over on command because it has been praised for doing so in the past, when a schoolroom bully threatens his classmates because doing so allows him to get his way, and when a child gets good grades because her parents threaten to punish her if she doesn't. In operant conditioning the organism learns from the consequences of its own actions.

How Reinforcement and Punishment Shape Behaviour: The Research of Thorndike and Skinner

Psychologist Edward L. Thorndike (1874-1949) was the first scientist to systematically study operant conditioning. In his research Thorndike (1898) observed cats who had been placed in a "puzzle box" from which they tried to escape ("Video Clip: Thorndike's Puzzle Box"). At first the cats scratched, bit, and swatted haphazardly, without any idea of how to get out. But eventually, and accidentally, they pressed the lever that opened the door and exited to their prize, a scrap of fish. The next time the cat was constrained within the box, it attempted fewer of the ineffective responses before carrying out the successful escape, and after several trials the cat learned to make the correct response almost immediately.

Observing these changes in the cats' behaviour led Thorndike to develop his law of effect, the principle that responses that create a typically pleasant outcome in a particular situation are more likely to occur again in a similar situation, whereas responses that produce a typically unpleasant outcome are less likely to occur again in the situation (Thorndike, 1911). The essence of the law of effect is that successful responses, because they are pleasurable, are "stamped in" by experience and thus occur more frequently. Unsuccessful responses, which produce unpleasant experiences, are "stamped out" and subsequently occur less frequently.

When Thorndike placed his cats in a puzzle box, he found that they learned to engage in the important escape behaviour faster after each trial. Thorndike described the learning that follows reinforcement in terms of the law of effect.
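Thorndike stated the law of effect in words, not as a formula. The Python below is a minimal sketch of the idea under a hypothetical update rule chosen for illustration: a response that fails loses weight and the successful response gains weight. The function name `puzzle_box_trials`, the response labels, and the `step` size are assumptions, not Thorndike's procedure. The output mirrors the pattern just described: the number of responses per trial tends to shrink as the successful response is stamped in.

```python
import random


def puzzle_box_trials(responses, successful, n_trials=10, step=0.5, seed=0):
    """Toy model of a cat in a puzzle box (hypothetical update rule).

    Every response starts with the same weight. On each trial the cat emits
    responses at random, in proportion to their weights, until it emits the
    successful one. A response that fails to open the box loses weight
    ("stamped out"); the successful response gains weight ("stamped in").
    Returns the number of responses the cat made on each trial.
    """
    if successful not in responses:
        raise ValueError("the successful response must be one of the responses")
    if not 0 < step < 1:
        raise ValueError("step must be between 0 and 1")
    rng = random.Random(seed)
    weights = {r: 1.0 for r in responses}
    attempts_per_trial = []
    for _ in range(n_trials):
        attempts = 0
        while True:
            names = list(weights)
            choice = rng.choices(names, weights=[weights[n] for n in names])[0]
            attempts += 1
            if choice == successful:
                weights[choice] += step  # pleasant outcome: stamp the response in
                break
            weights[choice] *= 1 - step  # unpleasant outcome: stamp the response out
        attempts_per_trial.append(attempts)
    return attempts_per_trial


history = puzzle_box_trials(
    ["scratch", "bite", "swat", "push wall", "press lever"],
    successful="press lever",
    n_trials=20,
)
print(history)  # responses per trial; the counts tend to drop toward 1
```

The update rule is deliberately crude; it is meant only to show how "stamping in" and "stamping out" together make the correct response come sooner on each trial.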

Video Clip: "Thorndike's Puzzle Box" [YouTube]: http://www.youtube.com/watch?v=BDujDOLre-8


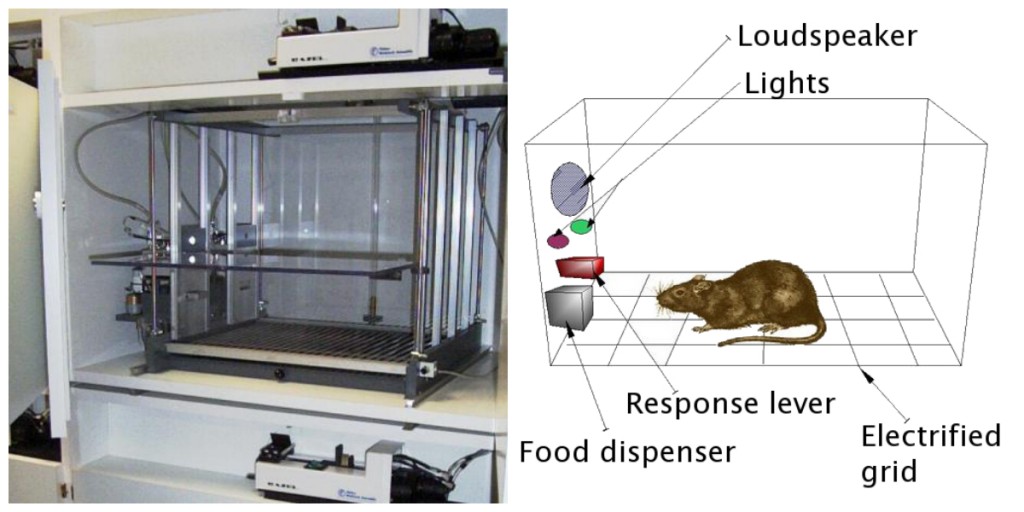

The influential behavioural psychologist B. F. Skinner (1904-1990) expanded on Thorndike's ideas to develop a more complete set of principles to explain operant conditioning. Skinner created specially designed environments known as operant chambers (usually called Skinner boxes) to systematically study learning. A Skinner box (operant chamber) is a structure that is big enough to fit a rodent or bird and that contains a bar or key that the organism can press or peck to release food or water. It also contains a device to record the animal's responses (Figure 8.5).

The most basic of Skinner's experiments was quite similar to Thorndike's research with cats. A rat placed in the chamber reacted as one might expect, scurrying about the box and sniffing and clawing at the floor and walls. Eventually the rat chanced upon a lever, which it pressed to release pellets of food. The next time around, the rat took a little less time to press the lever, and on successive trials, the time it took to press the lever became shorter and shorter. Soon the rat was pressing the lever as fast as it could eat the food that appeared. As predicted by the law of effect, the rat had learned to repeat the action that brought about the food and cease the actions that did not.

Skinner studied, in detail, how animals changed their behaviour through reinforcement and punishment, and he developed terms that explained the processes of operant learning (Table 8.1, "How Positive and Negative Reinforcement and Punishment Influence Behaviour"). Skinner used the term reinforcer to refer to any event that strengthens or increases the likelihood of a behaviour, and the term punisher to refer to any event that weakens or decreases the likelihood of a behaviour. And he used the terms positive and negative to refer to whether a reinforcement was presented or removed, respectively. Thus, positive reinforcement strengthens a response by presenting something pleasant after the response, and negative reinforcement strengthens a response by reducing or removing something unpleasant. For example, giving a child praise for completing his homework represents positive reinforcement, whereas taking aspirin to reduce the pain of a headache represents negative reinforcement. In both cases, the reinforcement makes it more likely that the behaviour will occur again in the future.

Table 8.1 How Positive and Negative Reinforcement and Punishment Influence Behaviour

| Operant conditioning term | Description | Outcome | Example |
|---|---|---|---|
| Positive reinforcement | Add or increase a pleasant stimulus | Behaviour is strengthened | Giving a student a prize after he or she gets an A on a test |
| Negative reinforcement | Reduce or remove an unpleasant stimulus | Behaviour is strengthened | Taking painkillers that eliminate pain increases the likelihood that you will take painkillers again |
| Positive punishment | Present or add an unpleasant stimulus | Behaviour is weakened | Giving a student extra homework after he or she misbehaves in class |
| Negative punishment | Reduce or remove a pleasant stimulus | Behaviour is weakened | Taking away a teen's computer after he or she misses curfew |

Reinforcement, either positive or negative, works by increasing the likelihood of a behaviour. Punishment, on the other hand, refers to any event that weakens or reduces the likelihood of a behaviour. Positive punishment weakens a response by presenting something unpleasant after the response, whereas negative punishment weakens a response by reducing or removing something pleasant. A child who is grounded after fighting with a sibling (positive punishment) or who loses out on the opportunity to go to recess after getting a poor grade (negative punishment) is less likely to repeat these behaviours.
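The two questions behind Table 8.1 (is a stimulus added or removed, and is it pleasant or unpleasant?) combine into exactly four outcomes. A minimal Python sketch of that 2 × 2 logic follows; the function name `classify_consequence` and its string inputs are hypothetical, chosen only for illustration. The code cannot settle which inputs apply in ambiguous cases; that judgment is still up to the observer.

```python
def classify_consequence(change, valence):
    """Name the operant consequence, following the logic of Table 8.1.

    change:  "add" if a stimulus is presented or increased after the behaviour,
             "remove" if a stimulus is reduced or removed.
    valence: "pleasant" or "unpleasant" (how the organism typically experiences it).
    """
    if change not in ("add", "remove"):
        raise ValueError("change must be 'add' or 'remove'")
    if valence not in ("pleasant", "unpleasant"):
        raise ValueError("valence must be 'pleasant' or 'unpleasant'")

    # "Positive" and "negative" only say whether a stimulus is added or removed.
    sign = "positive" if change == "add" else "negative"
    # Adding something pleasant, or removing something unpleasant, strengthens behaviour.
    strengthens = (change == "add") == (valence == "pleasant")
    kind = "reinforcement" if strengthens else "punishment"
    effect = "strengthened" if strengthens else "weakened"
    return f"{sign} {kind}: behaviour is {effect}"


# Taking aspirin removes the unpleasant pain of a headache.
print(classify_consequence("remove", "unpleasant"))
# negative reinforcement: behaviour is strengthened
```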

Although the distinction between reinforcement (which increases behaviour) and punishment (which decreases it) is usually clear, in some cases it is difficult to determine whether a reinforcer is positive or negative. On a hot day a cool breeze could be seen as a positive reinforcer (because it brings in cool air) or a negative reinforcer (because it removes hot air). In other cases, reinforcement can be both positive and negative. One may smoke a cigarette both because it brings pleasure (positive reinforcement) and because it eliminates the craving for nicotine (negative reinforcement).

It is also important to note that reinforcement and punishment are not simply opposites. The use of positive reinforcement in changing behaviour is almost always more effective than using punishment. This is because positive reinforcement makes the person or animal feel better, helping create a positive relationship with the person providing the reinforcement. Types of positive reinforcement that are effective in everyday life include verbal praise or approval, the awarding of status or prestige, and direct financial payment. Punishment, on the other hand, is more likely to create only temporary changes in behaviour because it is based on coercion and typically creates a negative and adversarial relationship with the person providing the punishment. When the person who provides the punishment leaves the situation, the unwanted behaviour is likely to return.

Creating Complex Behaviours through Operant Conditioning

Perhaps you remember watching a movie or being at a show in which an animal (maybe a dog, a horse, or a dolphin) did some pretty amazing things. The trainer gave a command and the dolphin swam to the bottom of the pool, picked up a ring on its nose, jumped out of the water through a hoop in the air, dived again to the bottom of the pool, picked up another ring, and then took both of the rings to the trainer at the edge of the pool. The animal was trained to do the trick, and the principles of operant conditioning were used to train it. But these complex behaviours are a far cry from the simple stimulus-response relationships that we have considered thus far. How can reinforcement be used to create complex behaviours such as these?

One way to expand the use of operant learning is to modify the schedule on which the reinforcement is applied. To this point we have only discussed a continuous reinforcement schedule, in which the desired response is reinforced every time it occurs; whenever the dog rolls over, for instance, it gets a biscuit. Continuous reinforcement results in relatively fast learning but also rapid extinction of the desired behaviour once the reinforcer disappears. The problem is that because the organism is used to receiving the reinforcement after every behaviour, the responder may give up quickly when it doesn't appear.

Most real-world reinforcers are not continuous; they occur on a partial (or intermittent) reinforcement schedule, a schedule in which the responses are sometimes reinforced and sometimes not. In comparison to continuous reinforcement, partial reinforcement schedules lead to slower initial learning, but they also lead to greater resistance to extinction. Because the reinforcement does not appear after every behaviour, it takes longer for the learner to determine that the reward is no longer coming, and thus extinction is slower. The four types of partial reinforcement schedules are summarized in Table 8.2, "Reinforcement Schedules."
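The reasoning above (a learner trained on partial reinforcement has already lived through runs of unrewarded responses, so it takes longer to notice that reward has stopped) can be sketched as a toy simulation. This is a hypothetical model, not a description of any particular experiment: the function `extinction_responses`, the training length, and the rule "give up once the current run of unrewarded responses is longer than any run seen in training" are all assumptions. It does not attempt to model the slower initial learning under partial schedules.

```python
import random


def extinction_responses(p_reinforce, n_training=200, seed=1):
    """Hypothetical toy model of resistance to extinction.

    Training: each response is reinforced with probability p_reinforce
    (1.0 means a continuous schedule). The learner remembers the longest
    run of unreinforced responses it experienced.
    Extinction: reinforcement stops entirely. Assumption: the learner keeps
    responding until the current dry spell is longer than any seen in
    training, then gives up.
    Returns the number of responses made during extinction.
    """
    if not 0 < p_reinforce <= 1:
        raise ValueError("p_reinforce must be in (0, 1]")
    rng = random.Random(seed)
    longest_dry_spell = 0
    current_dry_spell = 0
    for _ in range(n_training):
        if rng.random() < p_reinforce:
            current_dry_spell = 0  # reinforced: the dry spell ends
        else:
            current_dry_spell += 1
            longest_dry_spell = max(longest_dry_spell, current_dry_spell)
    # In extinction every response goes unreinforced, so the learner stops
    # one response after matching its longest dry spell from training.
    return longest_dry_spell + 1


print(extinction_responses(1.0))   # 1: continuous training, quits at the first miss
print(extinction_responses(0.25))  # partial training: keeps responding much longer
```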

Table 8.2 Reinforcement Schedules

| Reinforcement schedule | Explanation | Real-world example |
|---|---|---|
| Fixed-ratio | Behaviour is reinforced after a specific number of responses. | Factory workers who are paid according to the number of products they produce |
| Variable-ratio | Behaviour is reinforced after an average, but unpredictable, number of responses. | Payoffs from slot machines and other games of chance |
| Fixed-interval | Behaviour is reinforced for the first response after a specific amount of time has passed. | People who earn a monthly salary |
| Variable-interval | Behaviour is reinforced for the first response after an average, but unpredictable, amount of time has passed. | Person who checks email for messages |

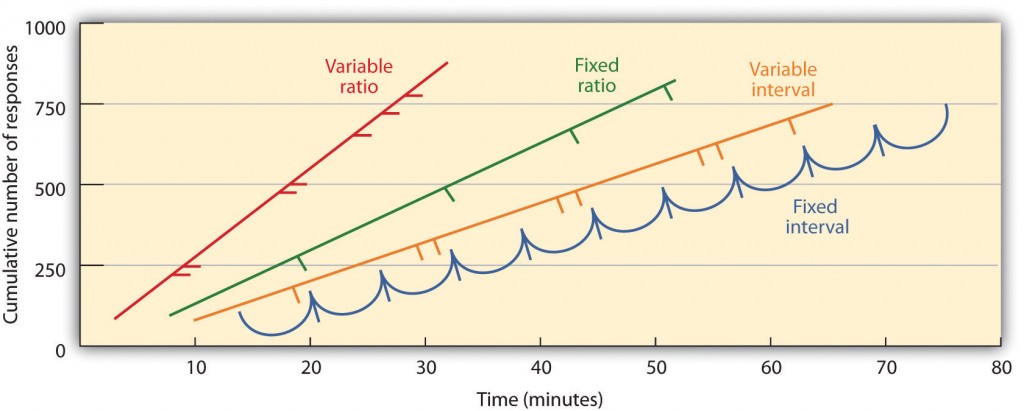

Partial reinforcement schedules are determined by whether the reinforcement is presented on the basis of the time that elapses between reinforcements (interval) or on the basis of the number of responses that the organism engages in (ratio), and by whether the reinforcement occurs on a regular (fixed) or unpredictable (variable) schedule. In a fixed-interval schedule, reinforcement occurs for the first response made after a specific amount of time has passed. For instance, on a one-minute fixed-interval schedule the animal receives a reinforcement every minute, assuming it engages in the behaviour at least once during the minute. As you can see in Figure 8.6, "Examples of Response Patterns by Animals Trained under Different Partial Reinforcement Schedules," animals under fixed-interval schedules tend to slow their responding immediately after the reinforcement but then increase the behaviour again as the time of the next reinforcement gets closer. (Most students study for exams the same way.) In a variable-interval schedule, the reinforcers appear on an interval schedule, but the timing is varied around the average interval, making the actual appearance of the reinforcer unpredictable. An example might be checking your email: you are reinforced by receiving messages that come, on average, say, every 30 minutes, but the reinforcement occurs only at random times. Interval reinforcement schedules tend to produce slow and steady rates of responding.
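Interval schedules can be written as a simple rule about time. The sketch below is a hypothetical illustration: the class names, the use of seconds as the time unit, and drawing variable-interval waits uniformly between 0 and twice the mean are assumptions, not part of the text.

```python
import random


class FixedInterval:
    """Reinforce the first response made once `interval` seconds have passed
    since the previous reinforcement (hypothetical class)."""

    def __init__(self, interval):
        self.interval = interval
        self.available_at = interval  # time at which the next reinforcer is available

    def respond(self, t):
        """Return True if a response at time t (in seconds) is reinforced."""
        if t >= self.available_at:
            self.available_at = t + self.interval  # the clock restarts at reinforcement
            return True
        return False


class VariableInterval:
    """Like FixedInterval, but each wait is random around `mean_interval`."""

    def __init__(self, mean_interval, seed=0):
        self.mean_interval = mean_interval
        self.rng = random.Random(seed)
        self.available_at = self._next_wait()

    def _next_wait(self):
        # Assumption: waits are uniform between 0 and 2 * mean_interval, so they
        # average mean_interval but any single wait is unpredictable.
        return self.rng.uniform(0, 2 * self.mean_interval)

    def respond(self, t):
        """Return True if a response at time t (in seconds) is reinforced."""
        if t >= self.available_at:
            self.available_at = t + self._next_wait()
            return True
        return False


# One-minute fixed-interval schedule, observed for five minutes.
fi = FixedInterval(60)
every_second = sum(fi.respond(t) for t in range(300))
fi = FixedInterval(60)
every_30_seconds = sum(fi.respond(t) for t in range(0, 300, 30))
print(every_second, every_30_seconds)  # 4 4
```

Responding once a second or once every 30 seconds earns the same four reinforcers here, which fits the observation that interval schedules do not reward fast responding.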

In a fixed-ratio schedule, a behaviour is reinforced after a specific number of responses. For instance, a rat's behaviour may be reinforced after it has pressed a key 20 times, or a salesperson may receive a bonus after he or she has sold 10 products. As you can see in Figure 8.6, "Examples of Response Patterns by Animals Trained under Different Partial Reinforcement Schedules," once the organism has learned to act in accordance with the fixed-ratio schedule, it will pause only briefly when reinforcement occurs before returning to a high level of responsiveness. A variable-ratio schedule provides reinforcers after a number of responses that varies unpredictably around a specific average. Winning money from slot machines or on a lottery ticket is an example of reinforcement that occurs on a variable-ratio schedule. For instance, a slot machine (see Figure 8.7, "Slot Machine") may be programmed to provide a win every 20 times the user pulls the handle, on average. Ratio schedules tend to produce high rates of responding because reinforcement increases as the number of responses increases.
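Ratio schedules count responses instead of watching the clock. In this hypothetical sketch the class names are illustrative, and the variable-ratio class gives each response an independent 1-in-`mean_n` chance of paying off. Strictly speaking that is a random-ratio version of a variable-ratio schedule, chosen because it is the simplest way to make each payoff unpredictable while keeping the average at `mean_n`.

```python
import random


class FixedRatio:
    """Reinforce every n-th response (for example, every 20th lever press)."""

    def __init__(self, n):
        self.n = n
        self.count = 0

    def respond(self):
        """Return True if this response is reinforced."""
        self.count += 1
        if self.count == self.n:
            self.count = 0  # start counting toward the next reinforcer
            return True
        return False


class VariableRatio:
    """Reinforce after an unpredictable number of responses averaging mean_n."""

    def __init__(self, mean_n, seed=0):
        # Assumption: each response independently pays off with probability
        # 1 / mean_n, like a slot machine that wins every 20 pulls on average.
        self.p = 1 / mean_n
        self.rng = random.Random(seed)

    def respond(self):
        """Return True if this response is reinforced."""
        return self.rng.random() < self.p


for presses in (100, 200):
    fr = FixedRatio(20)
    print(presses, sum(fr.respond() for _ in range(presses)))
# 100 5
# 200 10
```

Unlike the interval case, doubling the number of responses doubles the number of reinforcers, which is why ratio schedules tend to produce high response rates.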

Complex behaviours are also created through shaping, the process of guiding an organism's behaviour to the desired outcome through the use of successive approximation to a final desired behaviour. Skinner made extensive use of this procedure in his boxes. For instance, he could train a rat to press a bar two times to receive food, by first providing food when the animal moved near the bar. When that behaviour had been learned, Skinner would begin to provide food only when the rat touched the bar. Further shaping limited the reinforcement to only when the rat pressed the bar, to when it pressed the bar and touched it a second time, and finally to only when it pressed the bar twice. Although it can take a long time, in this way operant conditioning can create chains of behaviours that are reinforced only when they are completed.
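Shaping can be viewed as a sequence of ever-stricter criteria for reinforcement. The sketch below models only the trainer's side: the list of steps follows the rat example above, but the function `shape`, the rule of advancing after `successes_to_advance` reinforced trials, and describing each trial by the closest approximation the animal reached are assumptions for illustration. The animal's behaviour is supplied as input rather than simulated.

```python
# Criteria from loosest to strictest, following the rat example in the text.
SHAPING_STEPS = [
    "moves near the bar",
    "touches the bar",
    "presses the bar",
    "presses the bar and touches it a second time",
    "presses the bar twice",
]


def shape(behaviours_emitted, successes_to_advance=3):
    """Apply successive approximation (hypothetical trainer rule).

    behaviours_emitted: for each trial, the index into SHAPING_STEPS of the
    closest approximation the animal reached on that trial.
    A trial is reinforced only if it meets the current criterion; after
    `successes_to_advance` reinforced trials, the criterion moves one step
    closer to the final behaviour.
    Returns (list of reinforced trial numbers, final criterion index).
    """
    criterion = 0
    successes = 0
    reinforced_trials = []
    for trial, level in enumerate(behaviours_emitted):
        if level >= criterion:
            reinforced_trials.append(trial)
            successes += 1
            if successes == successes_to_advance and criterion < len(SHAPING_STEPS) - 1:
                criterion += 1  # demand a closer approximation from now on
                successes = 0
    return reinforced_trials, criterion


reinforced, final = shape([4] * 15)
print(SHAPING_STEPS[final])  # presses the bar twice
reinforced, final = shape([0] * 10)
print(reinforced, final)  # [0, 1, 2] 1
```

An animal that never gets past "moves near the bar" stops being reinforced once the criterion rises, which is the sense in which shaping reinforces only progress toward the final behaviour.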

Reinforcing animals if they correctly discriminate between similar stimuli allows scientists to test the animals' ability to learn, and the discriminations that they can make are sometimes remarkable. Pigeons have been trained to distinguish between images of Charlie Brown and the other Peanuts characters (Cerella, 1980), and between different styles of music and art (Porter & Neuringer, 1984; Watanabe, Sakamoto & Wakita, 1995).

Behaviours can also be trained through the use of secondary reinforcers. Whereas a primary reinforcer includes stimuli that are naturally preferred or enjoyed by the organism, such as food, water, and relief from pain, a secondary reinforcer (sometimes called a conditioned reinforcer) is a neutral event that has become associated with a primary reinforcer through classical conditioning. An example of a secondary reinforcer would be the whistle given by an animal trainer, which has been associated over time with the primary reinforcer, food. An example of an everyday secondary reinforcer is money. We enjoy having money, not so much for the stimulus itself, but rather for the primary reinforcers (the things that money can buy) with which it is associated.

Key Takeaways

- Edward Thorndike developed the law of effect: the principle that responses that produce a typically pleasant outcome in a particular situation are more likely to occur again in a similar situation, whereas responses that produce a typically unpleasant outcome are less likely to occur again in the situation.

- B. F. Skinner expanded on Thorndike's ideas to develop a set of principles to explain operant conditioning.

- Positive reinforcement strengthens a response by presenting something that is typically pleasant after the response, whereas negative reinforcement strengthens a response by reducing or removing something that is typically unpleasant.

- Positive punishment weakens a response by presenting something typically unpleasant after the response, whereas negative punishment weakens a response by reducing or removing something that is typically pleasant.

- Reinforcement may be either partial or continuous. Partial reinforcement schedules are determined by whether the reinforcement is presented on the basis of the time that elapses between reinforcements (interval) or on the basis of the number of responses that the organism engages in (ratio), and by whether the reinforcement occurs on a regular (fixed) or unpredictable (variable) schedule.

- Complex behaviours may be created through shaping, the process of guiding an organism's behaviour to the desired outcome through the use of successive approximation to a final desired behaviour.

Exercises and Critical Thinking

- Give an example from daily life of each of the following: positive reinforcement, negative reinforcement, positive punishment, negative punishment.

- Consider the reinforcement techniques that you might use to train a dog to catch and retrieve a Frisbee that you throw to it.

- Watch the following two videos from current television shows. Can you determine which learning procedures are being demonstrated?

- The Office: http://www.unwrap.com/usercontent/2009/11/the-office-altoid-experiment-1499823

- The Big Bang Theory [YouTube]: http://www.youtube.com/watch?v=JA96Fba-WHk

References

Cerella, J. (1980). The pigeon's analysis of pictures. Pattern Recognition, 12, 1–6.

Kassin, S. (2003). Essentials of psychology. Upper Saddle River, NJ: Prentice Hall. Retrieved from Essentials of Psychology Prentice Hall Companion Website: http://wps.prenhall.com/hss_kassin_essentials_1/15/3933/1006917.cw/index.html

Porter, D., & Neuringer, A. (1984). Music discriminations by pigeons. Journal of Experimental Psychology: Animal Behavior Processes, 10(2), 138–148.

Thorndike, E. L. (1898). Animal intelligence: An experimental study of the associative processes in animals. Washington, DC: American Psychological Association.

Thorndike, E. L. (1911). Animal intelligence: Experimental studies. New York, NY: Macmillan. Retrieved from http://web.archive.org/details/animalintelligen00thor

Watanabe, S., Sakamoto, J., & Wakita, M. (1995). Pigeons' discrimination of paintings by Monet and Picasso. Journal of the Experimental Analysis of Behavior, 63(2), 165–174.

Image Attributions

Figure 8.5: "Skinner box" (http://en.wikipedia.org/wiki/File:Skinner_box_photo_02.jpg) is licensed under the CC BY SA 3.0 license (http://creativecommons.org/licenses/by-sa/3.0/deed.en). "Skinner box scheme" by Andreas1 (http://en.wikipedia.org/wiki/File:Skinner_box_scheme_01.png) is licensed under the CC BY SA 3.0 license (http://creativecommons.org/licenses/by-sa/3.0/deed.en).

Figure 8.6: Adapted from Kassin (2003).

Figure 8.7: "Slot Machines in the Hard Rock Casino" by Ted Murpy (http://commons.wikimedia.org/wiki/File:HardRockCasinoSlotMachines.jpg) is licensed under CC BY 2.0 (http://creativecommons.org/licenses/by/2.0/deed.en).


Source: https://opentextbc.ca/introductiontopsychology/chapter/7-2-changing-behavior-through-reinforcement-and-punishment-operant-conditioning/
